It’s Day 2 of the first week of the LCS. Dignitas are playing Cloud9 in the second game of the day. After a cautious level 1, a double lane swap emerges, with LemonNation on Thresh and Sneaky on Ashe trying to fast-push the top lane against CoreJJ’s Kalista and KiWiKiD’s Nautilus. C9 are playing aggressively, even disrespectfully; Lemon has already blown his Flash on an early gank from Azingy on Zac. KiWiKiD slinks around the C9 minion line and lands a hook on Sneaky, LemonNation flubs his Flay, and CoreJJ starts filling the C9 ADC full of spears. In the river, Azingy prepares to slingshot himself into the fray. Sneaky, down to a quarter of his health, flashes to safety. But Azingy predicts the Flash and launches the Frost Archer into the air. CoreJJ flashes forward to finalize the kill, but the First Blood gold goes to Azingy. Four minutes in, Dignitas already have a solid lead.

Cloud9 went on to lose that game, but it wasn’t because of the First Blood. At least, according to popular belief, it was because of the words that appeared on screen immediately after: 8% #DIGWIN – 92% #C9WIN. According to this Rift legend, teams that earn over 90% of the Twitter vote, conducted live by Riot among the fans watching the game, are cursed and will go on to lose.

I looked at fan votes from the 2015 Spring LCS in both NA and EU and learned that there’s a kernel of truth to the belief, but also a healthy dose of selective memory.

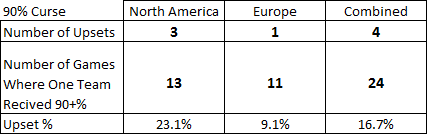

First, the headline results. How often did teams who earned more than 90% of the fan vote lose?

As you can see, games where a team received more than 90% of the vote are relatively rare: only 24 of 180 games (13%) saw that result. And upsets aren’t actually that common; in the Spring split the 90% curse struck only 4 times in those 24 games (about 17%).

But that isn’t really conclusive; the 90% curse doesn’t mean that a team is guaranteed to lose. It is supposed to mean that the vote makes a team do worse. But it’s hard to know what ‘worse’ means if we don’t have any idea about how regular, un-cursed, fan votes relate to teams’ winning chances. Does a team that gets 89% of the fan vote really do better than one that gets 91%? To answer that question I made the following two charts:

The idea behind these charts is pretty simple: take all of the votes that fall between 50% and 60% and see how many times the favoured team won. Then compare that to the ideal result (which would be about 55%, the midpoint of the range), and repeat for each higher range. As you can see, in both regions teams that received over 90% of the vote not only did better than teams that received over 80%, they did the best of any group. Generally speaking, the results are reasonably close to the ideal, notwithstanding a few glaring errors (the fans are particularly bad at figuring out how close games will go).
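The binning described above can be sketched in a few lines of Python. This is only an illustration, not the code behind the charts: the function name `calibration_table`, the 10-point bin width, the rule that a vote sitting exactly on a boundary (say 60%) goes into the higher bin, and the sample games are all assumptions made for the example.

```python
from collections import defaultdict


def calibration_table(games, bin_width=10):
    """Group games by the favourite's vote share and report how often it won.

    `games` is an iterable of (vote_pct, favourite_won) pairs, where vote_pct
    is the favourite's share of the fan vote (50-100) and favourite_won is a
    bool. Returns {bin_start: (win_rate, games_in_bin)}.
    """
    bins = defaultdict(lambda: [0, 0])  # bin_start -> [wins, games]
    for vote_pct, favourite_won in games:
        if not 50 <= vote_pct <= 100:
            raise ValueError("vote_pct must be the favourite's share (50-100)")
        # Assumption: boundary values go to the higher bin; 100% is folded
        # into the top bin instead of getting a bin of its own.
        start = min(int(vote_pct // bin_width) * bin_width, 100 - bin_width)
        bins[start][0] += int(favourite_won)
        bins[start][1] += 1
    return {start: (wins / n, n) for start, (wins, n) in sorted(bins.items())}


# Made-up games, purely to show the shape of the output.
sample_games = [(55, True), (58, False), (92, True), (95, True), (91, False), (100, True)]
for start, (win_rate, n) in calibration_table(sample_games).items():
    ideal = start + 5  # midpoint of a 10-point bin, e.g. 55 for 50-60
    print(f"{start}-{start + 10}%: won {win_rate:.0%} of {n} games (ideal ~{ideal}%)")
```

A bin whose win rate falls well below its midpoint is one where the fans were overconfident, and that gap is what a real "curse" would look like.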

However, in Europe 90% teams did barely any better than 80% teams, when they would be expected to do quite a bit better. And in neither region did 90%+ teams win even 95% of games. So maybe there is a hint of a curse at work there.

The next page looks into some interesting results that were produced when looking into the curse.

One question that interested me is how accurate the fan votes were as a strict prediction. Were they useful at all in figuring out who would win? To answer this, something a bit more nuanced than just the number of games that were guessed correctly is needed. This is because saying TSM have a 51% chance of beating Coast is very different from saying that they have a 99% chance of winning.

In order to determine how accurate the fan vote is overall I used a fairly simple metric called a Brier score. This turns predictions, expressed as percentages, and results into a measure of accuracy: it is the average squared difference between the predicted probability and what actually happened (1 for a win, 0 for a loss), so lower is better. The point of this was to figure out whether the fan vote is anything more than a popularity contest; whether it offers any useful information in predicting the outcome of contests.

The chart above compares two means of predicting the outcomes of LCS games: ‘Vote’ and ‘Points’. The first, ‘Vote’, is the fan vote. It was right 62% of the time in EU and 66% in NA and received Brier scores of about .23 and .22 respectively. To put that into context, if you said that every single game was a 50/50 you would receive a score of .25. So the fan votes aren’t that informative; they aren’t wrong (they get a fair majority of outcomes right), they just aren’t that right.
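For anyone who wants to check the .25 baseline, here is a minimal sketch of the standard Brier score for two-outcome predictions. The function name `brier_score` and the example numbers are illustrative assumptions, not the data behind the chart.

```python
def brier_score(forecasts, outcomes):
    """Mean squared error between forecast probabilities and results.

    `forecasts` are probabilities (0.0-1.0) that a given team wins;
    `outcomes` are 1 if that team won and 0 if it lost. Lower is better:
    0.0 is a perfect record and 1.0 is being certain and wrong every time.
    """
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("need one outcome per forecast, and at least one game")
    return sum((f - o) ** 2 for f, o in zip(forecasts, outcomes)) / len(forecasts)


# Calling every game a coin flip scores 0.25 no matter who wins.
print(brier_score([0.5, 0.5, 0.5, 0.5], [1, 0, 1, 0]))

# A single confident miss is expensive: a 92% favourite that loses.
print(brier_score([0.92], [0]))
```

Because the error is squared, a confident miss costs far more than a cautious one, which is why a predictor can call most games correctly and still barely beat the coin-flip score.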

I’m always interested in comparing complex or holistic prediction methods against simple heuristic ones. That was the point of including the ‘Points’ predictor. The predictor is, as you’ve no doubt guessed, based on how many points each team had accumulated when they played a given game. If they were tied then it was treated as a 50% prediction. For every point one team was ahead of the other, 5% was added to that team’s chances of winning (up to 100%; thanks Coast for making me add that caveat). As you can see, this metric was almost as accurate as the fan vote.
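The ‘Points’ rule is simple enough to write down directly. The sketch below follows the description above; the function name `points_forecast` and the example point totals are made up, and clamping the lower end at 0% is an assumption that mirrors the stated 100% cap.

```python
def points_forecast(points_a, points_b, step=0.05):
    """Chance that team A beats team B under the 'Points' heuristic.

    Start from 50% and add `step` (5%) for every point A is ahead of B,
    or subtract it for every point A is behind. The result is capped at
    100%; the matching floor at 0% is an assumption for symmetry.
    """
    probability = 0.5 + step * (points_a - points_b)
    return max(0.0, min(1.0, probability))


print(points_forecast(5, 5))   # level on points: a coin flip
print(points_forecast(7, 5))   # two points ahead
print(points_forecast(13, 1))  # twelve points ahead: hits the cap
```

Without the cap, a ten-point gap would already produce 100% and anything larger would go past it, which is presumably how Coast's record forced the caveat.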

As you can see, NA was more predictable than EU this year. But there weren’t just differences between the regions; there were also differences between the teams. The following table lists the Brier scores for games involving each team, ranked from most to least predictable.

Coast, unsurprisingly, was the most predictable team and the Unicorns were the least. The fans would have done better if they’d decided their predictions about the Unicorns with a coin toss.

The next table shows which teams were most underestimated by the fans (those at the top) and which were the most overestimated (those at the bottom).

Impulse and H2K consistently surprised fans with how good they were. In contrast, people never quite let go of their memories of Alliance when it came to thinking about Elements.

Published: Jun 23, 2015 09:55 pm