Twitch released its first Transparency Report today. The in-depth report reviewed everything the company did last year to improve safety and moderation on the platform.

While given the label “Transparency,” the 5,000-word report was less about revealing hidden details and more about reviewing the platform’s performance.

In the past year, Twitch has made numerous changes to its community guidelines regarding things like nudity, violence, harassment, and hateful conduct.

Due partially to the COVID-19 pandemic, viewership surged in 2020: the platform posted 18.6 billion hours watched, up nearly 70 percent from the year prior, according to stats from SullyGnome.

Growth on that scale naturally increases the need to protect users from harassment on an online platform like Twitch.

With a handful of charts, the report showed how Twitch saw an increase in both reports of misbehavior and enforcement actions for misconduct from the first half of 2020 to the second.

The report also noted the general approach Twitch takes to safety with a large tiered diagram. It even mentioned other measures the platform has taken to emphasize safety and inclusion, like the creation of an eight-member Safety Advisory Council.

Perhaps the biggest shortcoming of the report is that it’s called a “transparency” report, when transparency is something Twitch still lacks in certain areas, particularly in terms of streamer suspensions.

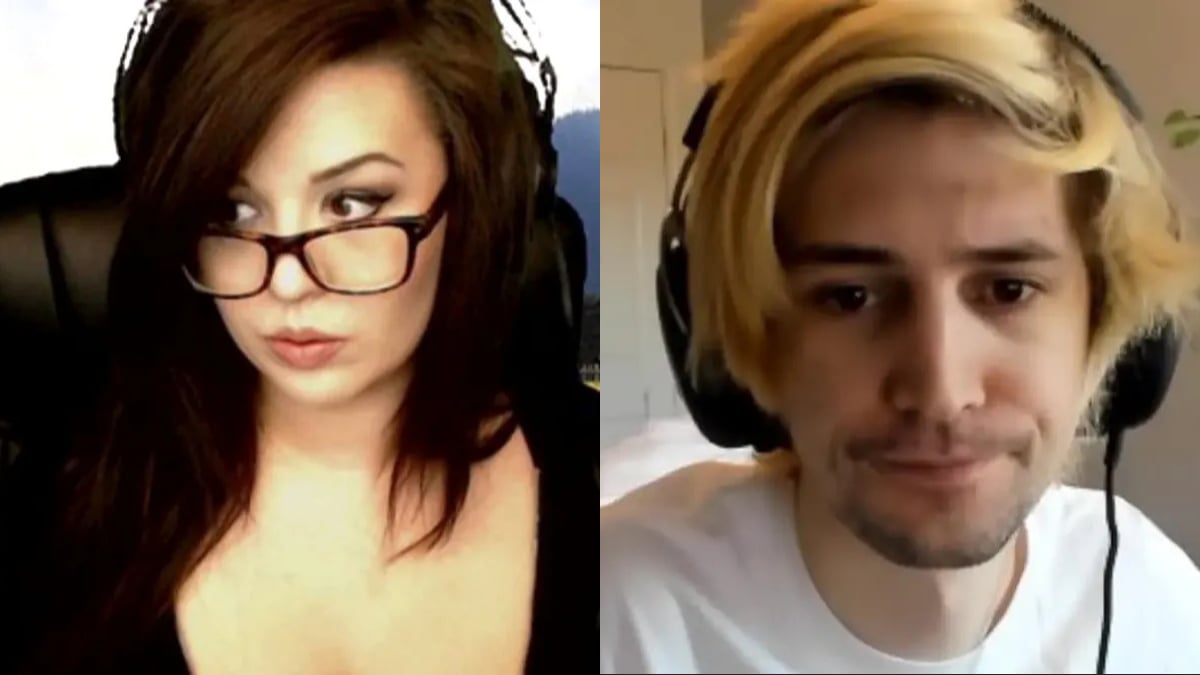

Twitch doesn’t comment on streamer suspensions, and it’s not uncommon for content creators to be banned for a period of time without knowing exactly what got them taken down.

While this report explained some of the processes that result in a channel suspension, there was nothing in it that suggested the platform intends to be more transparent in explaining bans to streamers or the public.